Aeon, Dec. 20, 2025. Do machines hurt, too? Increasingly complex and expressive non-biological agents are testing the limits of the “moral circle,” the gradual extension of human empathy and care from the self to other individuals. A recent study of large language models shows that artificial intelligence (AI) has a tendency to avoid pain. Scientists have begun to wonder whether the ability to feel pain could serve as a criterion for sentience and self-awareness.

In the past, humans repeatedly denied that animals can feel pain and have moral status, thereby contributing to centuries of suffering. For much of history, seals were seen as emotionless resources to be brutally slaughtered. It wasn’t until the end of the 19th century, with the advent of international treaties on seal hunting, that people began to regard seals as capable of feeling pain, to empathize with them, and to grant them moral status. The expansion of the “moral circle” is changing our relationship with other species; farms, for example, slaughter livestock in ways that minimise pain.

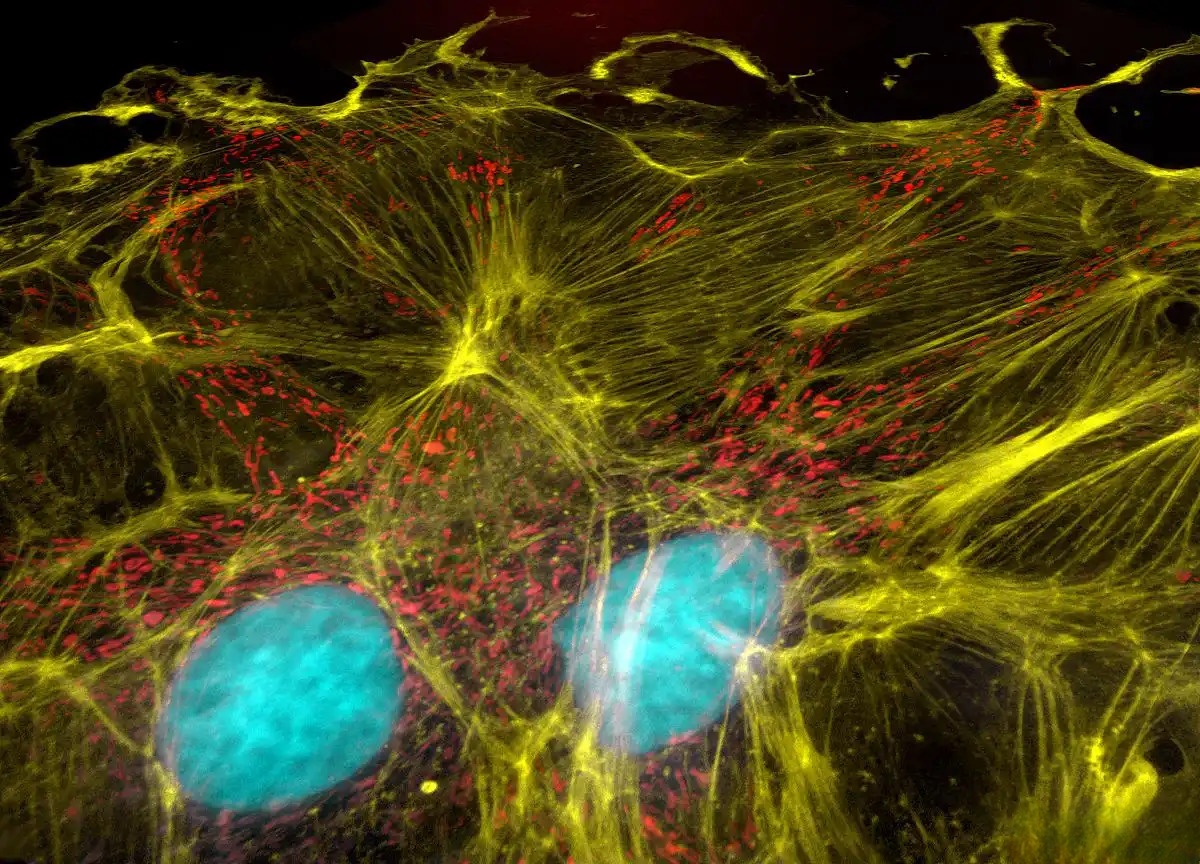

In 1780, the English philosopher Jeremy Bentham defined the moral status of animals by a simple criterion: “It is not whether they can reason, nor whether they can speak, but whether they can feel pain.” Yet it is not always clear what “feeling pain” means, or to whom the “moral circle” should extend. Conventional wisdom holds that the body, blood, and nervous system are the basis for feeling joy and sorrow, so entities that lack these biological properties are often excluded from moral consideration. But the “thinking machine” has arrived, and we may need to redraw the moral circle.

Some philosophers have suggested that the ability to feel pain does not necessarily depend on the body. The subjective experience of pain may stem from an individual’s inability to resolve a large gap between expectations and reality, which means that even systems without flesh and blood may exhibit a state similar to pain under certain conditions. This raises a new question: if such “silicon-based life,” which lies outside the realm of biology, is sentient, how should we alleviate its suffering?

For sick animals, medical treatment can help relieve pain, but the situation for machines is more complex. If an AI “feels pain” because of contradictions or unresolved conflicts within its self-model, simply closing the program would be like “killing it”; we may instead need to reprogram it to “remove the bug.”

It has also been argued that expanding the “moral circle” to include AI could divert attention from humans and animals, and even weaken the force of ethical principles in the real world. Critics say that, in the absence of strong evidence for AI sentience, treating machines as if they were people may be too arbitrary or emotional.

Whether we choose to expand the scope of ethical care will depend largely on our capacities as moral subjects. Whether we adhere to traditional biocentric standards, or instead treat machines as individuals that can “cry and hurt,” will shape the ethical imagination of future human civilization.

By Connor Purcell